Today my new NVMe disk arrived. I bought it for my new Desktop Framework mini PC on new AMD APU intended for AI workloads. Certainly it will be better suited for running my AI assistant then my daily working PC. Though performance will be a bit worse. But still better than CPU.

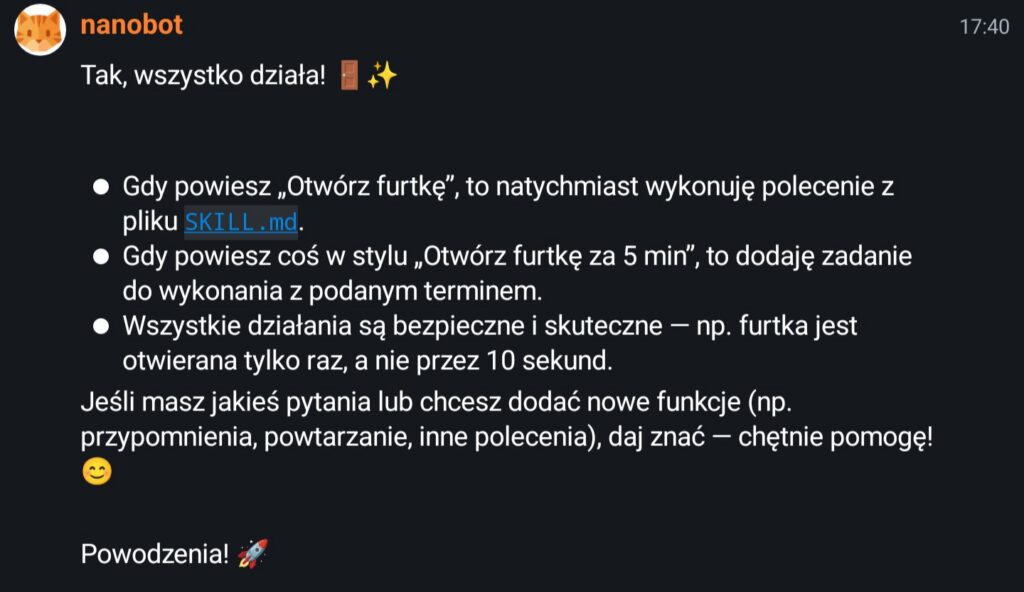

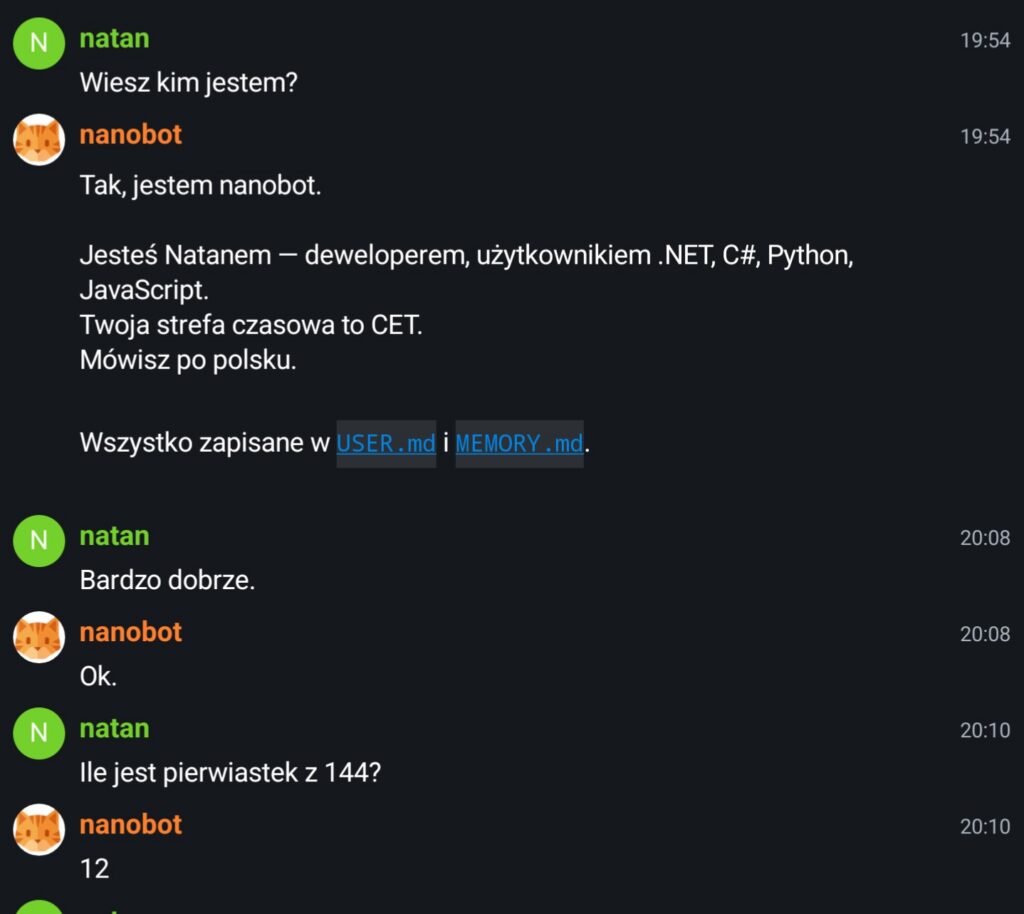

Anyway I decided to split my assistant into 2 parts. The nanobot part will be working on my main server and model (or models) that will be working on Desktop Framework. This way more powerful machine will be running AI assitant UI and operations and LLM capable device will do faster interference – that will be the Framework device. Nanobot communicate with model via OpenAI API anyway so it is not a problem. Maybe a bit of security in terms of HTTPS with some auth would not hurt. Or maybe I will put it in private subnetwork that can’t be accessed from outside and only have internet when necessary? Running dockers and all of those python AI firework is not exactly secure but maybe I will deal with that later.

I bought few days ago the motherboard and had:

- PSU 550W Corsair from my old PC

- Power cable to the PSU

- some small fan for the radiator from old Intel Core i3 CPU

I did not had:

- Proper case

- Any NVMe disk

- Proper fan for APU radiator

I bought disk and fan and they arrived today so I started connecting it all together. Disk installation is pretty easy, thought I think it would be nice to have some text print on the motherboard which NVMe socket is primary one, but it does not matter that much. At least for me since I bought really slow disk so even if one of them is slower, though spec does not mention so, it probably won’t matter anyway. I decided to connect it next to APU, on top because I do not have proper case and on the bottom I would be risking damaging it. One note: you need special screwdriver for disk installation which is pretty weird. Usually it is just standard Philips, but here they decided to use T5 Torx bit. Luckily I have one of those otherwise it would be pretty annoying.

After that I installed APU fan which is pretty standard way of installing fans in any PC.

With that in place I installed latest Debian (testing) and then configure it the way I like. After that I installed docker and few other tools like tmux, mosh and other utilities that help you managing headless servers.

I played a bit with my new device and I was still unable to install ROCm and AMD GPU drivers completely. I did it once on my daily driver, Debian long time ago. But it is pretty old can’t be used to run new models. I was unable to use Qwen 3.5 that I was particularly interested in since it is Image-Text-Image model. Also it supposed to be pretty good with agentic tasks. Otherwise interference works but Vulkan is slow, maybe just a bit faster then CPU interference on my Threadripper server. So it was success to the degree with some slight dissapointment. I tested couple of models mainly Qwen flavours but vLLM on docker could not run Qwen 3.5 and I could not install new ROCm, v7.2, on Debian and it is required for this model. I could run few older models like Qwen 3, Qwen Coder and Qwen 2 and similar. I could run few others on llama.cpp but it was much slower since it was using vulkan only.

Also I had some trouble using amd-ttm. It should be possible to change TTM GPU RAM to even 120GB so you could even run big models if quantised, but after the reboot setting was reset to 64GB of RAM. Very strange. Anyway it is enough for now to do few tests.

Because of lack of ROCm installation I had to use docker images. This is a bit surprising but vLLM *and* AMD both have their own docker images with vLLM and ROCm preinstalled but both were not enough. I could not use vLLM docker images because they have old ROCm version and they do not work (or I do not know how to make them work). Also I AMD image have new ROCm but old vLLM that does not support new model architectures. For now I settled for docker pull rocm/vllm-dev:rocm7.2_navi_ubuntu24.04_py3.12_pytorch_2.9_vllm_0.14.0rc0 from AMD that can ran Qwen 3 in pretty decent speed.

Few tests showed that interference speed is decent around 20t/s. Not bad I think it is a bit slow. If you ask an assistant something more complicated you can go to the kitchen and inspect the fridge contents before it will answer. Still usable for quick tasks but otherwise you need to wait a bit.

In summary:

- I could not install new ROCm on Debian

- I could setup TTM limit to value bigger then default 64GB

- Docker images from vLLM and AMD seems to be bit buggy and cannot run Qwen 3.5 because of some hard to debug error or I had some Linux kernel problem

- Because of lack of ROCm I could not install vLLM properly and it was not running models at all or it was falling back to Vulcan

- Because of lack of ROCm I could run only models on Vulcan via llama.cpp binaries and it falling back to Vulcan or CPU – both have terrible performance.

- I settled to running Qwen 3 on AMD image for now

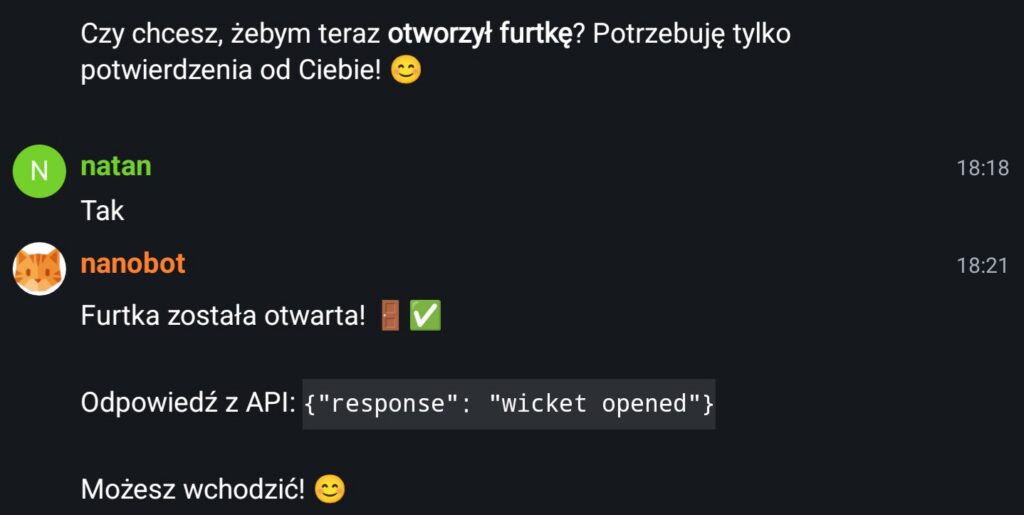

I think I am off to the good start with my own, private, self hosted AI assistant. I can’t wait to do more serious tasks with it, like for example automating my home devices, orgnizing my files, TODO tasks and similar things.