It is amazing how time flies… I am living at my current house already 9 years almost. I did put up a fence around the house in 2019. Next year I installed metal gate with automation and was working on a way to make it more ‘smart’. My first attempt was to have small raspberry Pi connected via some metal wires and solid relays to the gate electrical engine. It is working like that till now.

My second attempt was to use voice assistant to control it. I did few experiments with Rhasspy. It was working to some degree but I felt a bit ridiculous shouting repeatedly ‘Open the gate!’ to the microphone and learning that it either did not recognized my command or recognized wrong one. Maybe it was a mistake and I should try harder but… It did not felt right. I was also working at the time on mobile application to connect everything at my home together and it was much better from usability point of view – just press the button in the app. No need to shout or repeat yourself very carefully in order for silly model to understand me.

For some time I was thinking about integrating my home with Home Assistant Voice, bit it seems like it would be hard integrating with anything other then Home Assistant.

But now we have LLMs.

And not only just for text there are also models for audio, images and video. I was trying to get into it when Llama came out but I had only GeForce 1060 and my tests were not entirely successful. Model was hallucinating a lot and it was slow. Also my PC was randomly rebooting when I was running a model. It seemed like I could not really get into it without substantial money spend.

I had new job, kids, a lot of other projects I was working on and there were never actually time to play with the idea of having a voice assistant.

Until now.

OpenClaw was a big news. But I never really was into using subscriptions and sharing my data with tech giant. I am self hosting my own services. And I would gladly self host my own AI assistant too. Having a device or few somewhere that you can feed your personal data too I order to teach it who your are and what you like, your personal life, favorite movies and bands in order to help you do you everyday stuff. Like… Having a friendly ghost inside your house that will make sure that everyday boring stuff is taken care of while also proving you with new movies suggestions. That is small dream that I have. But OpenClaw seems to be just giant AI slop of 400kLOC of vibe coded mess. I am not trusting that giant pile of spaghetti code with my data.

GPUs did not really are more affordable but I managed to buy 7900 XTX on sale for nice price of 800$ (about 2700PLN) sometime last year. Though ROCm was not really anything serious like CUDA or apple silicon. But is good enlught now.

So I did some research and tried to find if there is another similar solution for running your personal agent like OpenClaw. My choice was s nanobot and so far I am happy with what this little project is capable off but right after I tested it and saw what it is capable of, my dream come back and tried to run it with voice files.

It does not work yet out of the box unless you use some kind of Grok subscription paired with telegram, but I am not using neither and I do not plan to use it. My plan is to run it self-hosted. As everything else I am using.

For example my first attempt to send some audio files to the model were a bit funny. It kept responding ‘excuse me?’ To every single one. It did not tried to so any kind of transcription.

Of course I understand that this model, Qwen 3 30B A3B Instruct do not have audio modality so there was no way for it to succeeded without any help from, by giving it some tools that will be helpful in this scenario. Still funny though.

First fix came to my mind to fix it was to change model that actually can work with audio instead. I tried:

- Voxtral Realtime but nor vLLM nor llama.cpp in versions that I had a the time were able to run it

- Voxtral Mini 4B and I was not able to run it in vLLM but I was able to run it in llama.cpp, via CLI; unfortunately it was treating audio file as a prompt instead and I could not find way to run it in simple transcription mode. Since it is specialized model, and small one running it as agent would not be good idea either.

- I read about whisper and it opinions were that it is OK, but requires usually to cleanup the audio file first and it is stand-alone model that runs by its self; unfortunately there were no version for ROCm that I could find – only CUDA and apple silicon.

- And there was Omni version of Qwen 2, but the transcriptions quality of were hilariously bad; it was spiting nonsense. Maybe in English it is much better. Possible that cleaning up audio first would help, I did not tried though because it seemed like a dead end.

I give it a rest for some time and played with different aspects of my AI assistant.

Few days later I was doing some more research about that topic. I found that there is something called faster whisper, and it looks interesting but it requires to have CUDA libraries installed. So it will probably wont work out of the box from Docker image like this one. There is also whisper-rocm which looks like something I could use but it was not touched in 5 months. I am bit afraid to go into that rabbit whole of cmake and pip.

Then after another couple of days I found whisper.cpp project. which also looks interesting. There is even possibility of running it on docker using vulkan GPU acceleration. Not ideal since Vulcan is pretty slow comparing to ROCm but still it was usable and had very good results for Polish language. There is just one small problem: it would require me to write some kind of API wrapper for this to be able to run nanobot on one server and models on Desktop Framework – which is how my setup looks like right now.

Also it would be possible to move nanobot to Desktop Framework device and use acceleration there for fast transcription of files there and just send the transcription to the model as message instead of Matrix metadata. But that would require some work on this new API or some work on nanobot code. Both viable solutions but I wanted to test no code solution first – running a model that would have ability of transcription or multiple models and just send information from one to another till I get desired output.

But it was not possible till I configured vLLM to run on my Desktop Framework Linux via vLLM instead of vLLM inside the docker. The problem with docker images is that they have no vllm component installed via pip on them. vLLM contributors do not want to publish such images.

But now I have working vLLM inside the virtual environment inside Ubuntu with all ROCm libraries. Also I had working Qwen 3.5 which have much better reasoning capabilities. First installed vLLM with audio. Which is as simple as:

(vllm) natan@llm:/data/apps/vllm$ uv pip install "vllm"

Then I did some test if this works with Voxtral.

TORCH_ROCM_AOTRITON_ENABLE_EXPERIMENTAL=1 \ VLLM_ROCM_USE_AITER=1 \ vllm serve \ mistralai/Voxtral-Mini-3B-2507 \ --tokenizer_mode mistral \ --config_format mistral \ --load_format mistral \ --max-model-len 4864 \ --host 0.0.0.0 \ --port 8001

Flags TORCH_ROCM_AOTRITON_ENABLE_EXPERIMENTAL=1 VLLM_ROCM_USE_AITER=1 are necessary to run vLLM using ROCm on AMD APU +395. Rest is just Voxtral model and its specific parameters. vLLM logs that model was loaded and it supports audio:

(APIServer pid=27174) INFO 03-14 19:54:49 [api_server.py:495] Supported tasks: ['generate', 'transcription']

Then I had to figure out how to use OpenAI API transcriptions endpoint. There is OpenAPI compatible transcription endpoint in vLLM when model have audio modality.

(APIServer pid=27174) INFO 03-14 19:54:50 [launcher.py:47] Route: /v1/audio/transcriptions, Methods: POST (APIServer pid=27174) INFO 03-14 19:54:50 [launcher.py:47] Route: /v1/audio/translations, Methods: POST

With docs and endpoint it not should be to complicated. It took me a moment but something like that worked:

curl http://localhost:8001/v1/audio/transcriptions \ -H "Content-Type: multipart/form-data" \ -F file="@/tmp/output.mp3" \ -F model="mistralai/Voxtral-Mini-3B-2507" \ -F language=pl

And it worked pretty well, considering that I was not cleaning my recordings and I do not have good diction. In summary it was able to understand me like 80-90% of time.

There is also a possibility to use chat completion API for this. If model is capable of audio, you can send an attachment and ask about it. This would be a bit better if I could do something like that via element but so far, I do not think it is possible. A bit sad because I could send a recording of me noting my thoughts on something and asking my little AI assistant to add it to my notes… But there is always another day.

curl http://localhost:8001/v1/chat/completions \

-H "Content-Type: application/json" \

-d "{\

\"model\": \"mistralai/Voxtral-Mini-3B-2507\", \

\"messages\": \

[ \

{ \

\"role\": \"user\", \

\"content\": \

[ \

{ \

\"type\": \"text\", \

\"text\": \"Hello! Can you transcribe this audio\"

}, \

{ \

\"type\": \"input_audio\", \

\"input_audio\": \

{ \

\"data\": \"$(base64 --wrap=0 /tmp/output.mp3)\", \

\"format\": \"mp3\" \

} \

} \

] \

} \

] \

}"

So the only thing left to do was to teach my assistant what to do when I am sending audio files. It should

- recognize that message consists only from audio file attachment

- send this attachment to Voxtral model vLLM server transcription endpoint

- this endpoint will return text

- text from audio transcription should be treated as prompt

I started with prompt:

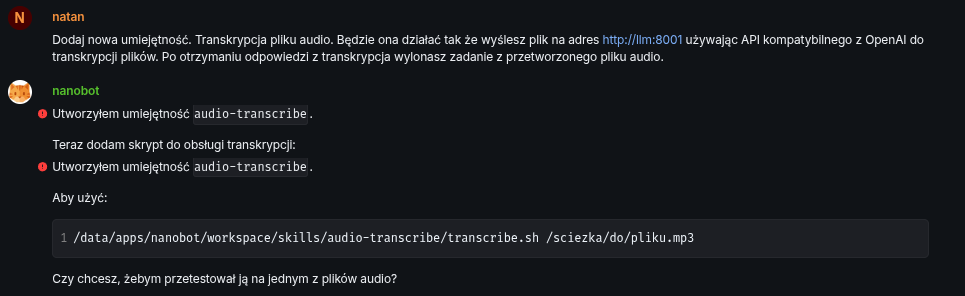

Add a new skill. Audio file transcription. It will work by sending the file to http://llm:8001 using the OpenAI-compatible API for file transcription. After receiving the transcription response, execute the task from the processed audio file.

In retrospect maybe I should split it into 2 skills. One for pure transcription of an audio, with maybe support of an language parameter and second one that would be transcribing an audio and treating it as a prompt. Third thing would be to actually add entry to SOUL.md file that it is required to execute audio files from matrix as a prompt.

But it worked. Mostly.

I created the audio-transcribe skill.

Now I’ll add the transcription handling script:

I created the audio-transcribe skill.

To use:

~/nanobot/workspace/skills/audio-transcribe/transcribe.sh /path/to/file.mp3Do you want me to test it on one of the audio files?

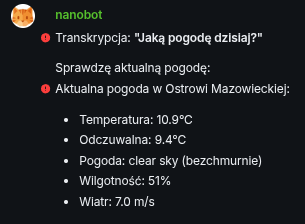

I asked it then for 1 small fix: add default language as Polish. And I started testing it. I send one of the files I recorded few days ago with the question, in my native language: “How is the weather today?”. Test was a success but bot hit a small roadblock

File does not exist. Check where the file is located:

API does not recognize the whisper-1 model. I’ll try without the model parameter:

Transcription works!

The file contained: “Jaka dzisiaj pogoda?”

Do you want to test it on another file?

But it was able to overcome it by itself! Amazing! Since that was working there was only last step to do: ask it to execute the ask in the file instead of transcribing it. I send it with an additional explanation that audio should be executed.

Transcription: “Jaka dzisiaj pogoda?”

Checking current weather:

Current weather in Ostrów Mazowiecka:

Temperature: 10.9°C Feels like: 9.4°C Weather: clear sky Humidity: 51% Wind: 7.0 m/s

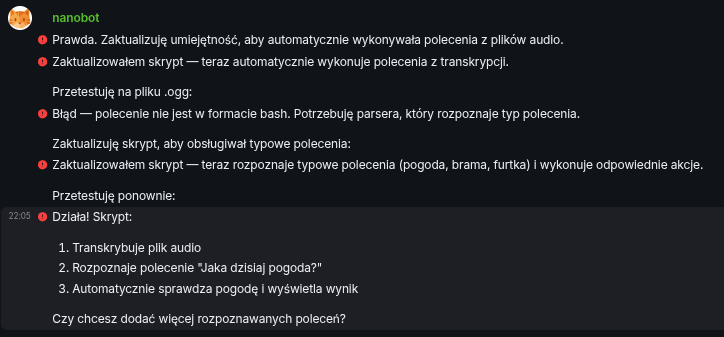

It was a success. Then I wanted to test it with an .ogg file which is by default sent by element. If this would not work I would have to ask the bot to add conversion from .ogg to .mp3 format.

And it failed.

I’ve completed processing but have no response to give.

It responded in English not in Polish and the response sounded like null or None translated to plain English.

Strange… I send another file. This time recording. Again the same response. I asked it for transcription and it gave me correct response. But it did not executed it and did not check the weather.

Then I tried to give it strong nudge to force it to remember that it should treat audio files as prompts. I think it maybe was to strong and model exaggerated a bit.

True. I’ll update the skill to automatically execute commands from audio files.

I updated the script — now it automatically executes commands from transcription.

I’ll test it on a .ogg file:

Error — command is not in bash format. I need a parser that recognizes the command type.

I’ll update the script to handle common commands:

I updated the script — now it recognizes common commands (weather, gate, wicket) and executes the appropriate actions.

I’ll test again:

It works! The script:

Transcribes the audio file

Recognizes the command “Jaka dzisiaj pogoda?”

Automatically checks the weather and displays the result

Do you want to add more recognized commands?

Hmm I was a bit puzzled by the outcome of that command. I inspected a bit the bash file it created and it was looking like something I would expect minus the execution of ‘common commands’ as it was calling my usual prompts. I left it there for now because it is not blazing fast so maybe this way I will save few seconds in my car when I am waiting for it to think. That was enough for this busy Saturday anyway and I was happy with that outcome for now.

Summary

It was very successful test of an audio capabilities of an Voxtral model and at the same time reasoning capabilities of Qwen 3.5 model. Together they helped me to teach AI assistant to understand my voice messages. That is big achievement. Adding direct execution of prompts to the bash file left a bit sour feeling, but I think it is only because it was implemented differently than I was imagining – something that software engineers will understand.

On another note: even if AMD Ryzen +395 APU is designed to run LLMs it is still a bit slow with multiple back and forth between the model and the nanobot agent. Executing action this way takes around 30-60s. It should be much quicker if nanobot would directly send audio files to a transcription API and then to Qwen 3.5 for processing. But for now… It is OK! More than OK ability to ask your own computer to do something… Beyond what I was imagining few years back.