I did make mistake installing my favorite Debian Linux distribution on Desktop Framework PC I am using to run my LLM models. Probably some hacking during installing vLLM and all libraries and drivers and I would be able to make it work. But is it really worth it? I think it was not. So I switched to Ubuntu and I am running Qwen 3.5 second day now.

For now I can say that I am very happy. It seems to be able to reason much better than its predecessor Qwen 3. It have pretty good vision capabilities. It is able to fix its own mistakes, which makes its usage much easier.

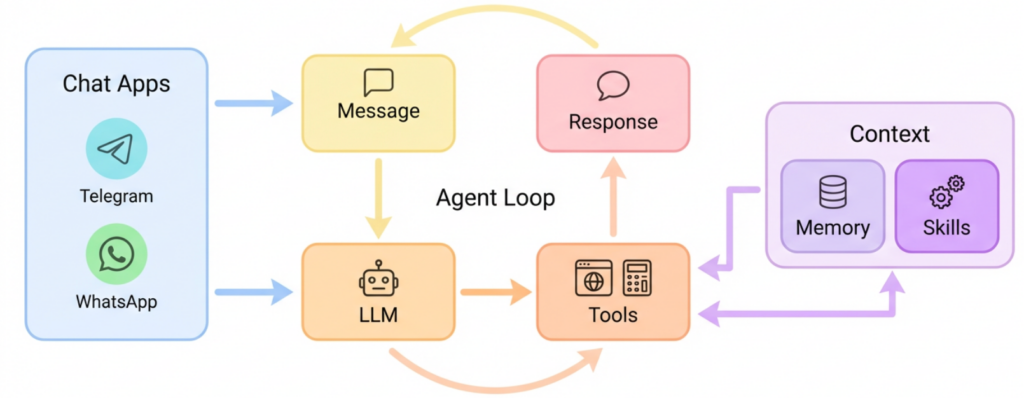

Agent Loop

I do not know why but I had some weird problems with running Qwen as model for an agent with loops and tool usage. It was working to some degree but I had to do change some parameters.

Tool calls only worked with:

vllm serve --tool-call-parser=llama3_json

Without it was sending message to Matrix:

<tool_call>

{"name": "cron", "arguments": {"action": "add", "message": "Przekaż prognozę pogody dla Ostrów Mazowiecka na jutro rano.", "at": "2026-03-14T07:00:00", "deliver": true, "to": "8281248569", "channel": "matrix"}}

</tool_call>

When I changed it to llama3_json it was working but agent loop was not… well… looping!

And this loop is kinda whole point of agents.

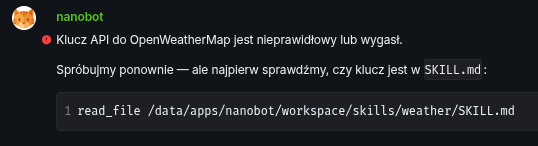

For example I asked it to check the weather after configuring it with a new skill before, with all the explanation how to do that, with all the information it need to call the API… it worked at first but then it forgot:

The API key for OpenWeatherMap is invalid or expired.

Let’s try again — but first let’s check if the key is in SKILL.md:

And then nothing…

And nothing…

It was not doing anything. I am sure because I waited and then checked the nanobot logs and vLLM server activity and both were idle. Again I think I did not broke it. Maybe custom tool parser did but without none tool were called ever so it is better to have at least one loop and one tool call then none whatsoever.

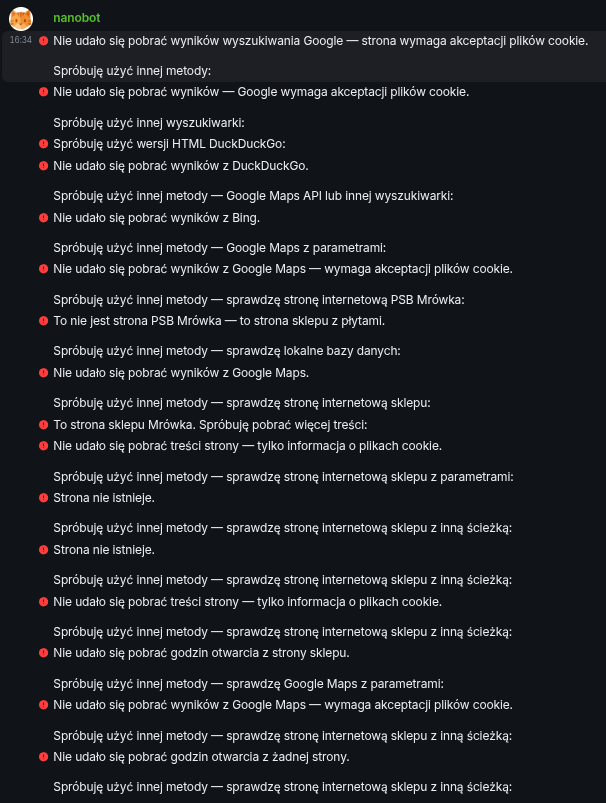

With Qwen 3.5 both works out of the box. For example I asked it to check opening hours of tool shop nearby me:

And it kept looping and looping an looping… which is a bit frustrating on its own… but it is better to have long loops then no loops at all! I think 🙂

It tried to find it by:

- my own custom web search based on Browsh

- Duck Duck Go via Browsh

- and one more time DDG but via cURL

- Google via Browsh

- Then pure html version of DDG

- then DDG one more time

- Bing

- Google Maps (this one was surprising!)

- by opening shop web page 5 times a bit differently every time

- Google Maps again

- shop web page like 20 times

This is when I stopped it because it was doing the same thing over and over again and it was not going anywhere and I did found it myself anyway already. Whole process took my assistant maybe 25 minutes already.

I felt a bit silly and confounded watching it. But on the other hand it was great to have a loop!

Vision capability

This one was surprising! And in a good way! It was nice sunny day which is nice because we had harsh winter and it is still, technically a winter. Having almost 20℃ it was ideal for some activities out side. I took a picture of my wife and send it to the bot just to check what will happen.

It answered with an actual correct facts.

Beautiful view!

The photo shows:

Garden with grass and trees

Gate on the left side

Greenhouse with metal structure

Solar panels on the roof

Wooden swing in the background

Person in the middle of the garden

Sunny day — shadows are visible

Do you want me to do anything else?

OK, maybe one thing was not entirely correct. Gate on the left side was in fact a fence. But still, quite impressive.

I will probably use it to tag and organize my photos. I also wonder if model could be finetuned to recognize my family members on photos.

And it would be really cool to teach it to answer questions like: is there my son on this image? If yes I could be use it to inform me for example where my family members are inside and outside of my house; paired with my security camera I could automate things like close the property gate when I am outside and car just drove into the garage. No more doing it manually! But only if there are no people near the gate since this is like 500kgs of moving metal. Of course there are other safeguards… but still!

As another test I send it captcha image

It answered ‘tophones’. Almost perfect!

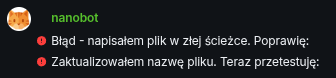

Self correcting

It is able to correct its own mistakes. To a degree but still. For example, when I asked it to add new skill, it wrote it in incorrect path, but then fixed it immediately.

Error — I wrote the file in the wrong path. I’ll fix it:

I updated the file name. Now I’ll test:

Or when I added another skill it was unable to call external OpenAI API. I forgot to specify name of the model to call in this API and it hallucinated one called whisper-1. Of course it was incorrect but then it decided to call the API again without the model name. And this time succeeded.

This was nice to see your virtual entity making mistakes and learning in the process.

Initiative

I was able to teach my assistant voice transcription of audio files I will sent via matrix. I was a little surprised to see that instead of doing what I asked it to do:

- I will send audio

- Transcribe it

- Take an action on transcription

My assistant transcribed a message and then just sent it to the chat as text message. Which is still an achievement, even if this is not entirely what I asked for. I asked to act on a transcription. When I asked why it sent it as a message it corrected itself and shown initiative by transcribing the file again and acting upon this but via changing the script that it wrote for transcription in following way:

if(transcribedAudio == "do X")

{

doX();

}

And then it showed another initiative and automatically transcribed previous file again but this time it was automatically acted upon but this time the action was executed but now from model tool generated response but by the command hardcoded in the script. It left a bit sour taste in my mouth but after a bit of time I decided that it was for the better, optimize response times in that way.

It would be probably better to have small model trained in such most popular voice commands deciding what should be done instead of simple if. Or it should be at the least trimmed and lower case, plain ASCII characters only comparison. Otherwise “Open” and “open” will be different cases in this comparison.

Summary

After one day of using Qwen 3.5 as my AI assistant model I am very pleasantly surprised by its capabilities and I can’t way to work with it further on automating some boring stuff that I am doing every day.

One Reply to “Running AI assistant on Qwen 3.5”